Many radial distortion functions have been presented to describe the mappings caused by radial lens distortions in common commercially available cameras. For a given real camera, no matter what function is selected, its innate mapping of radial distortion is smooth, and the signs of its first- and second-order derivatives are fixed. However, such differential constraints have never been considered explicitly in existing methods of radial distortion correction.
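To make the differential constraint concrete, here is a minimal sketch that checks whether a candidate distortion function keeps the signs of its first and second derivatives fixed over the image radius. The two-coefficient polynomial model, the coefficient names `k1` and `k2`, and the normalised radius range are assumptions chosen for illustration; the text above does not commit to a particular function.

```python
def distort(r, k1, k2):
    """Assumed polynomial radial model: r_d = r * (1 + k1*r^2 + k2*r^4)."""
    return r * (1.0 + k1 * r**2 + k2 * r**4)


def first_derivative(r, k1, k2):
    """d(r_d)/dr for the polynomial model above."""
    return 1.0 + 3.0 * k1 * r**2 + 5.0 * k2 * r**4


def second_derivative(r, k1, k2):
    """d^2(r_d)/dr^2 for the polynomial model above."""
    return 6.0 * k1 * r + 20.0 * k2 * r**3


def same_sign(values):
    """True if every value is strictly positive or every value is strictly negative."""
    return all(v > 0 for v in values) or all(v < 0 for v in values)


def derivative_signs_fixed(k1, k2, r_max=1.0, samples=200):
    """Sample (0, r_max] and test that both derivatives keep a single sign."""
    radii = [r_max * i / samples for i in range(1, samples + 1)]
    first = [first_derivative(r, k1, k2) for r in radii]
    second = [second_derivative(r, k1, k2) for r in radii]
    return same_sign(first) and same_sign(second)


if __name__ == "__main__":
    # A mild barrel-type coefficient set keeps both signs fixed.
    print(derivative_signs_fixed(-0.2, 0.0))
    # Here the second derivative changes sign inside the radius range.
    print(derivative_signs_fixed(-0.5, 0.3))
```

A check like this could be used to reject parameter estimates that no physical lens would produce, although how the constraint is actually enforced during estimation is not described in the text.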

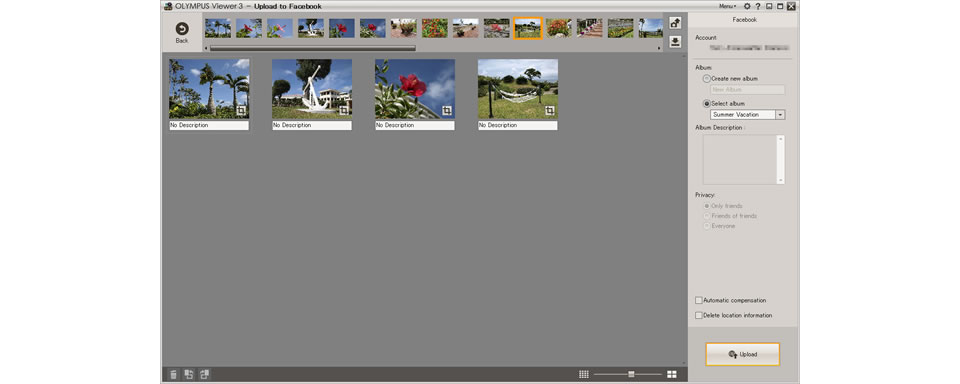

We study mappings on sub-Riemannian manifolds which are quasiregular with respect to the Carnot-Carathéodory distances and discuss several related notions. On H-type Carnot groups, quasiregular mappings have been introduced earlier using an analytic definition, but so far, a good working definition in the same spirit is not available in the setting of general sub-Riemannian manifolds. In the present paper we therefore adopt a metric rather than analytic viewpoint. As a first main result, we prove that the sub-Riemannian lens space admits nontrivial uniformly quasiregular (UQR) mappings, that is, quasiregular mappings with a uniform bound on the distortion of all the iterates. In doing so, we also obtain new examples of UQR maps on the standard sub-Riemannian spheres. The proof is based on a method for building conformal traps on sub-Riemannian spheres using quasiconformal flows, and an adaptation of this approach. One may then study the quasiregular semigroup generated by a UQR mapping. In the second part of the paper we follow Tukia to prove the existence of a measurable conformal structure which is invariant under such a semigroup. The conformal structure is specified only on the horizontal distribution, and the pullback is defined using the Margulis-Mostow derivative (which generalizes the classical and Pansu derivatives).

To correct fisheye lens distortion, existing methods use prior information, such as calibration patterns or lens design specifications. However, the use of a calibration pattern works only when the input scene is a 2-D plane at a prespecified position. On the other hand, the lens design specifications can be understood only by optical experts. To solve these problems, we present a novel image-based algorithm that corrects the geometric distortion. The proposed algorithm consists of three stages: i) feature detection, ii) distortion parameter estimation, and iii) selection of the optimally corrected image out of multiple corrected candidates. The proposed method can automatically select the optimal amount of correction for a fisheye lens distortion by analyzing characteristics of the distorted image, using neither prespecified lens design parameters nor calibration patterns. Furthermore, our method performs not only on-line correction by using facial landmark points, but also off-line correction described in subsection III-C. As a result, the proposed method can be applied to a virtual reality (VR) or augmented reality (AR) camera with two fisheye lenses in a field-of-view (FOV) of 195°, autonomous vehicle vision systems, wide-area visual surveillance systems, and unmanned aerial vehicle (UAV) cameras.
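The three-stage structure can be sketched as follows. This is only an illustration under stated assumptions, not the paper's method: feature detection is replaced by a hypothetical list of points known to lie on a straight scene line, the one-parameter division model stands in for whatever lens model the authors use, and the selection criterion is a swapped-in straightness score rather than the paper's own measure. All function names are invented for this sketch.

```python
import math


def undistort(points, k):
    """Assumed division model: p_u = p_d / (1 + k * |p_d|^2), centred coordinates."""
    corrected = []
    for x, y in points:
        s = 1.0 + k * (x * x + y * y)
        corrected.append((x / s, y / s))
    return corrected


def distort(points, k):
    """Inverse of the division model; used here only to build synthetic input."""
    distorted = []
    for x, y in points:
        r_u = math.hypot(x, y)
        if r_u == 0.0 or k == 0.0:
            distorted.append((x, y))
            continue
        r_d = (1.0 - math.sqrt(1.0 - 4.0 * k * r_u * r_u)) / (2.0 * k * r_u)
        distorted.append((x * r_d / r_u, y * r_d / r_u))
    return distorted


def straightness_error(points):
    """Ratio of the small to the large eigenvalue of the 2x2 point covariance.

    The ratio is zero for perfectly collinear points and does not depend on scale.
    """
    n = len(points)
    mx = sum(p[0] for p in points) / n
    my = sum(p[1] for p in points) / n
    sxx = sum((p[0] - mx) ** 2 for p in points) / n
    syy = sum((p[1] - my) ** 2 for p in points) / n
    sxy = sum((p[0] - mx) * (p[1] - my) for p in points) / n
    half_trace = 0.5 * (sxx + syy)
    spread = math.sqrt((0.5 * (sxx - syy)) ** 2 + sxy ** 2)
    small, large = max(0.0, half_trace - spread), half_trace + spread
    return small / large if large > 0.0 else 0.0


def select_best(points, candidates):
    """Stages ii and iii: correct with each candidate k, keep the straightest result."""
    return min(candidates, key=lambda k: straightness_error(undistort(points, k)))


if __name__ == "__main__":
    # Stage i stand-in: points on the straight line y = 0.3, then distorted.
    line = [(-0.8 + 0.2 * i, 0.3) for i in range(9)]
    observed = distort(line, -0.3)
    print(select_best(observed, [0.0, -0.1, -0.2, -0.3, -0.4]))
```

Scoring a small set of candidates instead of running a continuous optimiser mirrors the "multiple corrected candidates" wording in the abstract, but the candidate grid itself is an arbitrary choice.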

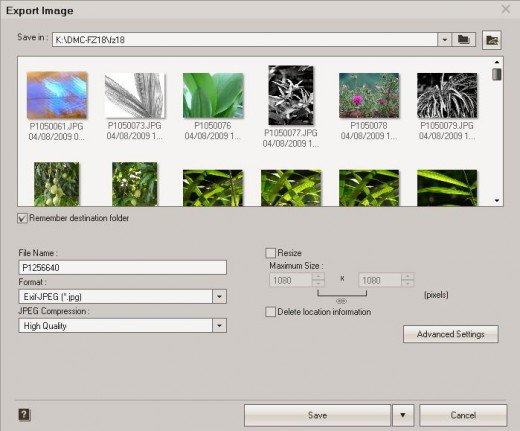

Images acquired by a fisheye lens camera contain geometric distortion that results in deformation of the object's shape.

I understand how the pixel-based shader works. My first question is about projecting a render target onto a warped mesh. As I understand it, I should first render the image from the game cameras onto two tessellated quads (one per eye), then apply a shader with the correction to these quads, and then draw the quads in front of the main camera. The second question is about vertex displacement. Should I simply apply a shader to the camera that translates the vertex coordinates of every object from world space into inverse-lens-distorted screen space (lens space)? Sorry for my terrible English, I just want to understand how it works.
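For the first approach (render target on a warped mesh), the mesh itself can be built ahead of time. Below is a rough sketch in Python rather than shader code. It assumes a polynomial radial scale with made-up coefficients `k1` and `k2`, a quad spanning [-1, 1] in both axes, and regular texture coordinates; the function name `build_warp_mesh` is hypothetical, and a real engine or headset SDK may do this differently.

```python
def build_warp_mesh(n, k1, k2):
    """Return (positions, uvs) for an (n + 1) x (n + 1) vertex grid of one eye's quad.

    UVs stay on a regular grid so they sample the rendered eye texture directly;
    vertex positions are scaled radially, so a negative k1 pulls the outer
    vertices toward the centre (barrel-shaped mesh).
    """
    positions, uvs = [], []
    for j in range(n + 1):
        for i in range(n + 1):
            u, v = i / n, j / n                  # texture coordinates in [0, 1]
            x, y = 2.0 * u - 1.0, 2.0 * v - 1.0  # quad coordinates in [-1, 1]
            r2 = x * x + y * y
            scale = 1.0 + k1 * r2 + k2 * r2 * r2
            positions.append((x * scale, y * scale))
            uvs.append((u, v))
    return positions, uvs


if __name__ == "__main__":
    positions, uvs = build_warp_mesh(4, -0.2, 0.0)
    print(len(positions), positions[0], uvs[0])
```

With this approach the correction is applied only to the grid vertices and interpolated in between, so the quad has to be tessellated finely enough for the interpolation error to stay invisible.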
